Due to reader request, we’ve written up a guide on how to configure AMD NVMe RAID and then compared the performance of different NVMe RAID configurations against a single SATA SSD.

The objective is to cover how to configure AMD RAID, show you some benchmarks and then summarise at the end to help you decide if this storage approach is likely to benefit your needs. All of the solid state storage devices used in this project were supplied by ADATA and were brand new with no prior use or wear. I’d also like to thank Jack from ASUS Australia in particular for his support from the very start of the project and through the testing.

When you buy a modern system it will almost always come with an SSD. All SSDs are significantly faster than hard drives, and there are two main types available: SATA and M.2 NVMe. The legacy mSATA format is still available but has been replaced by the M.2 socket, and the mSATA socket is seldom found on modern motherboards.

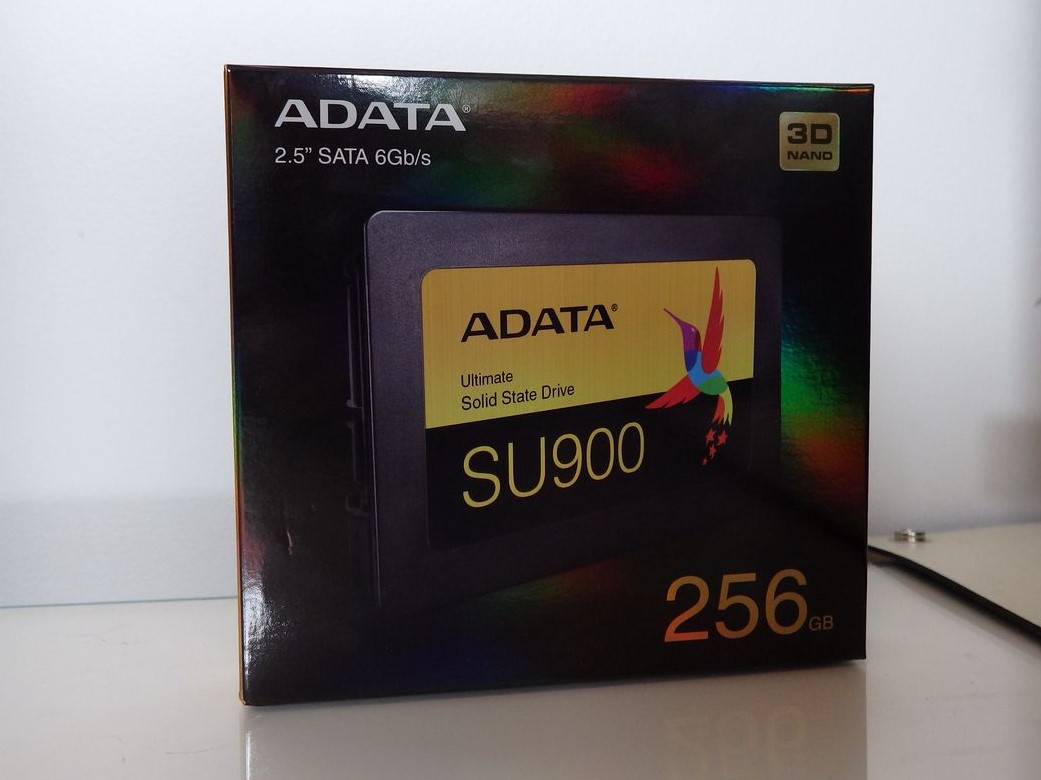

SATA SSDs (regardless of their connector) use the same interface as mechanical hard drives and top out at transfer rates of about 550MB/s. SATA SSDs are available in a 2.5″ form factor, like the SU900 drive that you’ll meet in a minute, or in an M.2 configuration. NVMe drives transfer their data over PCIe lanes from either the CPU or the motherboard chipset and can run at much faster speeds than the alternative SATA technology.

So we have ascertained that SATA SSDs are fast and can hit speeds up to about 550MB/s, with NVMe drives even faster again hitting speeds around the 3GB/s mark. The next step up after this is RAMDISK where volatile system memory is used but you still have to load the drive image into memory from a non-volatile location on boot. RAMDISK capacities are limited and the cost per GB is prohibitive for large scale uses. This is where the performance benefits of RAID might give power users or enthusiasts the storage speed edge they are looking for.
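To put those figures in context, here is a minimal sketch of where the interface ceilings come from. It assumes the standard line rates and encodings (SATA 6Gb/s with 8b/10b encoding, PCIe Gen3 at 8 GT/s per lane with 128b/130b encoding); the function name is just for illustration, and real drives land below these theoretical numbers once protocol overhead is included.

```python
# Theoretical interface ceilings; real-world drives land below these figures.

def link_ceiling_mb_s(gigatransfers_per_s: float, payload_bits: int,
                      encoded_bits: int, lanes: int = 1) -> float:
    """Usable bandwidth in MB/s after line-encoding overhead."""
    usable_bits_per_s = gigatransfers_per_s * 1e9 * payload_bits / encoded_bits
    return usable_bits_per_s * lanes / 8 / 1e6

# SATA 6Gb/s: 8 payload bits are carried in every 10 bits on the wire.
sata3 = link_ceiling_mb_s(6.0, 8, 10)

# PCIe Gen3: 8 GT/s per lane, 128 payload bits per 130 bits on the wire.
pcie3_x4 = link_ceiling_mb_s(8.0, 128, 130, lanes=4)

print(f"SATA 6Gb/s ceiling:   {sata3:.0f} MB/s")
print(f"PCIe Gen3 x4 ceiling: {pcie3_x4:.0f} MB/s")
```

This is why SATA SSDs cluster around 550MB/s no matter how fast the flash is, while a four-lane NVMe drive has room to run several times faster.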

What is RAID?

RAID is best described on Wikipedia, but to cherry-pick the relevant points:

“RAID (Redundant Array of Inexpensive Disks or Drives, or Redundant Array of Independent Disks) is a data storage virtualization technology that combines multiple physical disk drive components into one or more logical units for the purposes of data redundancy, performance improvement, or both…. Data is distributed across the drives in one of several ways, referred to as RAID levels, depending on the required level of redundancy and performance.”

The main implementations of RAID are:

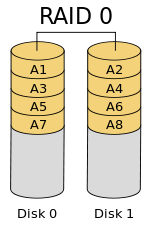

- RAID 0 – block level striping across all physical disks in the array without redundancy or parity.

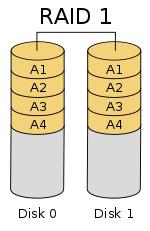

- RAID 1 – data is mirrored across two physical disks with full redundancy. This approach does not use striping or parity.

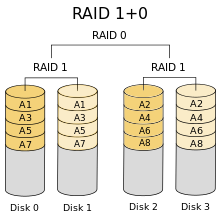

- RAID 10 – a RAID 0 array of nested RAID 1 arrays. A minimum of 4 drives is required for RAID 10. This approach has full redundancy. I didn’t cover RAID 10 in our testing as we only had the 3 drives and the use case is very specific.
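The three levels above can be sketched in a few lines. This is a simplified illustration, not how any particular RAID controller is implemented: the function names are my own, capacities are nominal rather than formatted, and the round-robin striping ignores the configurable stripe size that real arrays use.

```python
from __future__ import annotations


def usable_capacity_gb(level: str, drives: int, drive_gb: int) -> int:
    """Nominal usable capacity for an array of identical drives."""
    if level == "RAID0":
        if drives < 2:
            raise ValueError("RAID 0 needs at least 2 drives")
        return drives * drive_gb          # striped, no redundancy
    if level == "RAID1":
        if drives != 2:
            raise ValueError("this sketch models a 2-drive mirror")
        return drive_gb                   # one full copy per drive
    if level == "RAID10":
        if drives < 4 or drives % 2:
            raise ValueError("RAID 10 needs an even number of drives, 4 or more")
        return drives // 2 * drive_gb     # striped across mirrored pairs
    raise ValueError(f"unknown level: {level}")


def stripe_location(logical_block: int, drives: int) -> tuple[int, int]:
    """Map a logical stripe unit to (drive index, row on that drive)."""
    return logical_block % drives, logical_block // drives


print(usable_capacity_gb("RAID0", 3, 256))   # three 256GB drives striped
print(usable_capacity_gb("RAID1", 2, 256))   # two 256GB drives mirrored
print([stripe_location(b, 3) for b in range(6)])
```

The striping map shows why RAID 0 can be faster: consecutive blocks land on different drives, so a large sequential transfer keeps every drive busy at once. It also shows why there is no redundancy, since losing any one drive removes every third block.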

SATA hard drives and SSDs have been able to be configured in RAID via motherboard and discrete storage controllers for decades so it isn’t a new concept. Sometimes system builders will use RAID for speed, sometimes for redundancy and sometimes for both. Motherboards typically have between 4 and 8 SATA ports for connecting hard drives or SSDs and configuring a RAID array is generally very simple so long as all the drives are the same capacity and speed.

This is a little more complicated for NVMe RAID due to the fact that each storage device needs PCIe lanes and these can be shared with graphics cards, networking devices, USB and other storage controllers.
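A quick way to think about this is as a lane budget. The sketch below is illustrative only: the 60 usable CPU lanes and the x16 graphics card link are assumptions I’ve plugged in, so check your own CPU and motherboard documentation. The x4 link per NVMe drive matches the PCIe Gen3x4 interface of the drives used here.

```python
from __future__ import annotations


def lanes_remaining(total_lanes: int, devices: dict[str, int]) -> int:
    """Return the lanes left after every device gets its full link width."""
    used = sum(devices.values())
    if used > total_lanes:
        raise ValueError(f"over budget: need {used} lanes, have {total_lanes}")
    return total_lanes - used


# Assumed figure for illustration only; check your own platform.
ASSUMED_CPU_LANES = 60

build = {
    "graphics card (x16)": 16,
    "NVMe drive 1 (x4)": 4,
    "NVMe drive 2 (x4)": 4,
    "NVMe drive 3 (x4)": 4,
}

spare = lanes_remaining(ASSUMED_CPU_LANES, build)
print(f"Lanes used: {sum(build.values())}, lanes spare: {spare}")
```

On a platform with far fewer CPU lanes, the same three-drive array would either not fit or would force some devices onto shared chipset lanes, which is where the complication comes from.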

Testing AMD NVMe RAID was something we had wanted to do for a while with the Threadripper platform due to the large number of PCIe lanes available from the CPU. We also had some reader questions about how well NVMe RAID scales given that these drives already operate at very high (relatively speaking) speeds in a standard configuration. Another question we’ve had and also seen debated on forums was about the performance hit of RAID 1 where the drives are mirrored.
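Before looking at any benchmarks, it helps to know what perfect scaling would look like. The sketch below computes the ideal RAID 0 sequential read ceiling from the SX8200 Pro’s spec-sheet maximum of 3500MB/s and gives a helper for expressing a measured result as a fraction of that ideal. These are theoretical upper bounds, not results, and the function names are my own.

```python
def ideal_raid0_mb_s(single_drive_mb_s: float, drives: int) -> float:
    """Best case for striping: every drive contributes its full speed."""
    if drives < 1:
        raise ValueError("need at least one drive")
    return single_drive_mb_s * drives


def scaling_efficiency(measured_mb_s: float, single_drive_mb_s: float,
                       drives: int) -> float:
    """Measured throughput as a fraction of the ideal RAID 0 ceiling."""
    return measured_mb_s / ideal_raid0_mb_s(single_drive_mb_s, drives)


SPEC_READ_MB_S = 3500  # spec-sheet maximum sequential read, not a measurement

for n in (2, 3):
    ceiling = ideal_raid0_mb_s(SPEC_READ_MB_S, n)
    print(f"{n} drives: ideal RAID 0 read ceiling {ceiling:.0f} MB/s")
```

To use it, pass your own benchmark figure to `scaling_efficiency`; a value near 1.0 means the array is scaling almost linearly, while a lower value points to overhead somewhere in the chain. For RAID 1 the expectation is different: every write has to go to both drives, so write speed should sit at or below that of a single drive.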

All these questions will be answered with benchmarks and some step-by-step instructions on how we configured the ASUS X399 ZENITH EXTREME test system with an AMD Threadripper 2950X processor. The process may differ on other motherboards but the concept should remain the same.

So, what did we test?

We tested RAID 1 and RAID 0 (first with two NVMe drives, then retested with three in the array). We also benchmarked the same SX8200 Pro NVMe drive in NVMe mode and as a single drive in RAID mode to see if the controller mode made any difference to performance. The SU900 SATA SSD was tested in AHCI mode only and is used as a typical reference point for comparing the NVMe storage against the cheaper and slower SATA technology.

Testing setup

It’s a project like this that I really look forward to. I’d like to give a massive shout out to AMD, ASUS, ADATA and Thermaltake for the products that we used.

ASUS ROG X399 ZENITH EXTREME Test Rig Specification

• AMD Threadripper 2950X

• Enermax LIQTECH 240mm water cooler

• 32GB (4x8GB) G.SKILL FLARE-X DDR4 3200

• ASUS ROG X399 Zenith Extreme Motherboard

• ASUS ROG STRIX GTX 1080Ti OC

• ADATA SU900 256GB SSD

• ADATA SX8200 Pro 256GB NVMe (3 drives in AMD NVMe RAID0)

• Seagate Firecuda 2TB 3.5″ HDD

• Corsair RM-850 PSU

• Thermaltake View71 Case

• Logitech G810 keyboard

• Razer DeathAdder Chroma Mouse

• BenQ EX3501R Monitor

The star of the show was the AMD second generation Threadripper 2950X but the ASUS X399 ZENITH EXTREME motherboard allows the 2950X to shine. Make no mistake, this motherboard is my favourite board of all time and has been since I first tested it last year; I’d be building my next system around this component if I was undertaking a personal build right now.

ADATA provided 4 SSDs for this project: 1 x SATA SSD (an SU900 for a boot drive) and 3 x M.2 NVMe drives for the RAID performance testing and instructional purposes. All drives are 256GB in capacity, and the detailed specifications are below for reference.

| Specification | XPG SX8200 Pro | ADATA SU900 |
| --- | --- | --- |
| Controller | SMI | SMI 2258 |
| Performance (Max) | Read 3500MB/s, Write 3000MB/s. Maximum 4K random read/write IOPS: up to 390K/380K. * Performance may vary based on SSD capacity, host hardware and software, operating system, and other system variables. | 560/525MB/s. * Actual performance may vary due to available SSD capacity, system hardware and software components, and other factors. |
| Interface | PCIe Gen3x4 | SATA 6Gb/s |
| Form Factor | M.2 2280 | 2.5" |
| Storage temperature | -40°C to 85°C | -40°C to 85°C |
| Shock resistance | 1500G/0.5ms | 1500G/0.5ms |
| Available Capacities | 256GB / 512GB / 1TB / 2TB | 128GB / 256GB / 512GB / 1TB / 2TB |
| Dimensions (L x W x H) | 22 x 80 x 3.5 mm | 100.45 x 69.85 x 7 mm |
| Weight | 8g / 0.28oz | 59.5g |
| Warranty | 5-year limited warranty. * The SSD is based on the TBW or Warranty period. | 5-year limited warranty. * The SSD is based on the TBW or Warranty period. |
| NAND Flash | 3D TLC | 3D MLC |
| MTBF | 2,000,000 hours | 2,000,000 hours |
| Operating temperature | 0°C to 70°C | 0°C to 70°C |

Finally, the Thermaltake View71 case. This case is a fantastic chassis and very easy to work with. The open design makes changing components easy, and the top radiator/fan mounts are the best I’ve ever used – you can pre-fit the fans or radiator and then easily lower the bracket into place. The drive cages are removable and there is space to hang HDDs and SSDs on the back side of the motherboard tray. For the purposes of testing, I use the cages as they are easier to access. Thermaltake designed the case with ample cable management space and the panels can all be removed without tools and in seconds. The name is suitable as the case has glass panels on the top, front and both sides – the sides have swing out doors that are great. There are 4 RGB Riing fans included that add a little bling to what is already a very nice looking case. This case is another favourite here and I was keen to use it for this project.

We’ve used the ASUS STRIX GTX 1080 Ti OC in the past and with such a high end system on the table, it seemed appropriate to include that in the build. The STRIX cooler from ASUS is one of the best going around and allows gamers to really push their graphics card without the noise of a tornado coming from their rig. The GTX 1080 Ti in this build hammers out the frames when gaming but barely makes a sound. Again, this would be my first choice of graphics card cooler in a personal build.

With all of this high end kit, the project has been given every chance to deliver the best results possible for a non-overclocked system.

Can you test this with 1TB drives? Think it would be faster?

Good review

Thank you for your instruction and test. I too would like to see with 1TB. My 1950X needs this 😀

Interested in Cache settings, what would be recommended for NVME and SSD?

This is an excellent review!!! As good as it gets!!!!

Thank you!!!!

I had a problem with the central M.2 socket, which is under the chipset heat sink: my Samsung 970 Pro NVMe gets too hot and the machine restarts, so I’m just using the DIMM.2 for my M.2 SSD.

Hi – what is your full system spec?

I’ve used this test platform in a Thermaltake View 71 case with good airflow and more recently in an In-Win 303 with less effective airflow and a RADEON VII graphics card. I haven’t had any issues with the SSDs getting hot so I’d be interested to know what else you are running with your setup.

OK, I have an NZXT Kraken X73 on the top of the case, a 1900X processor, 4 DIMMs of DDR4 G.SKILL Trident Z RGB CL14 3200MHz, 2 front Noctua 140mm industrial 3000rpm fans, a 120mm Noctua fan for the back of the case, a 950 Pro SSD 512, and a Seagate 4TB mechanical HDD. Also an RX5700XT, the 970 Pro M.2 NVMe, a Seasonic Prime Titanium 1000 power supply, and the Thermaltake Suppressor F31 case. My monitor is an AOC E2250SWDN, and I have a Steinberg UR22mkII audio card, 2 M-Audio BX5a speakers and a Canon E401 printer. That’s all.

I can’t see anything there that would be an issue. You should have good airflow with that fan setup and you’re not running multi GPU so I’d expect the heat to be well under control.

The DIMM.2 slot is more accessible and is also in probably the coolest part of the board.

I’ve been running this RAID setup with the ZENITH as documented in the article for about a year. I had to change the Enermax TR4 AIO cooler out because it gummed up so there’s a Noctua NH-U12S TR4-SP3 fitted now. The RAID0 array and all SSDs have been perfect without any issues at all with almost daily use.

Maybe my motherboard has an issue with that M.2 socket. I think there is a problem with it because when I fit the M.2 drive it remains a bit loose until the screw is put in; it is not like the DIMM.2 socket, which goes in tight. But I will have to test this again by buying another M.2 drive…

Phil, I want to configure this as well as I can, but I don’t have a RAID configuration. I want to get the best out of this in the BIOS configuration, so any recommendation would be good. Another thing: I need to change my monitor and was looking for one that has DP 1.4 and HDMI 2.0 like my video card, the asus rog 8c RX5700XT….. BenQ EX2780Q (27″ 144Hz IPS, 2560 x 1440)

I have found good references for this monitor, but I have seen many negative comments on the sales pages suggesting that it is more advertising than anything else.

I am looking for good price-to-performance that doesn’t hurt my pocket too much.

I wanted the RADEON VII for this setup but it was impossible for me to get it; too expensive.

Now I’m thinking of using an alpha dim2 that came with the heatsink; I think it would work perfectly…

Great article, as NVMe RAID setup documentation is few and far between out there. We are working on a video right now basically talking about the same thing using 4 1TB Intel M.2 SSDs with a controller card on an AMD X570 chipset motherboard. I got everything to work fairly easily, but then, as you mentioned, I lost Windows 10.

I was trying to change the drivers just like you were talking about because Windows would not put them back to regular drives so I could test the drive as a volume, not as RAID. I was also testing 2-drive combos and different arrays, and the blue screen of death hit me a bunch of times, finally rendering Windows useless and requiring a new install.

I’m in the process of creating backups and images of windows in case this happens again and so I can continue my testing….But I wanted to mention that from my benchmarking when it was all running; it pretty much matched a lot of your data on using single NVME m.2 vs RAID, especially when they are now going at the same speed with PCIe 3.0 speeds or 4.0 if your drives are 4.0.

Just as your very well written article pokes at, I’m questioning the usefulness of RAID 0 as an everyday drive for video editing in DaVinci Resolve.

My question to you is this….When running your RAID 0 have you had any drive failures or anything happen that would sway you away from using RAID 0?

I have been running the same setup since the article went live and I haven’t had a single issue or any failed drives. The M.2 locations on the X399 Zenith are out of the hot zones so they won’t get cooked, but this doesn’t make them immune to random failure. The rig is set up to boot from a 2.5″ SATA SSD so I can still try to roll back any updates that conflict with the RAID driver, and I don’t keep anything on the RAID 0 array that isn’t backed up, so for me it’s low risk and low impact if I have a problem.

I haven’t had anything happen that would sway me away from AMD NVMe RAID specifically but there is one downside that I can see – upgrading. I have 3x256GB SSDs in the array on the X399 Zenith, using up all available M.2 slots. This was done as a proof of concept. If I want to upgrade it, I’ll have to buy 3 larger SSDs and rebuild the array.

All up, it works well and my top recommendation is NOT to use it as a system drive – standard SSDs are going to be fast enough for most people as a boot drive anyway. Keep the important elements of your rig simple.

Did you configure/test TRIM in the RAID setup? I’m trying to see if it is possible to configure TRIM on NVMe AMD RAID.

Hi Sam – Good question.

When I did this project there was little detail available regarding TRIM for AMD NVMe RAID. Most of the material I found indicated that TRIM wasn’t supported back then so I didn’t configure and test that element. The array was set up per official guidelines from both AMD and ASUS to go through the recommended/supported approach and show the results that we could expect. Now that you’ve raised the question I’ll have to add it to the list of things to revisit 😉

Did you ever figure out if Trim worked in this setup? I’m finding conflicting information all over the place!